Realtime mobile object detector in Xamarin.Android

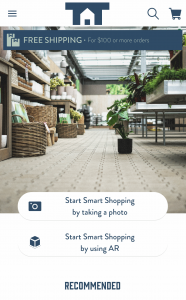

Last year the Xamarin team helped build TailwindTraders, a set of reference samples for Microsoft Build.

In particular, we were responsible for the Xamarin.Forms demos, which showcased the main features of Forms, including Shell.

TailwindTraders is a fictitious DIY brand that sells tools for work, gardening, and more in physical stores.

One part of the application was an AR experience in which frames from the rear camera of an Android phone or iPhone were captured in real time to identify a product, show its details, and make purchase recommendations.

On Android, we used the Xamarin binding of android.hardware.camera2 to display the rear camera preview, and a custom version of EmguTF to detect the products we had agreed on, so we could show customers some of their characteristics and make recommendations.

We initially agreed to identify three objects, but since this was a demo app we ended up using only one of them: a white hardhat.

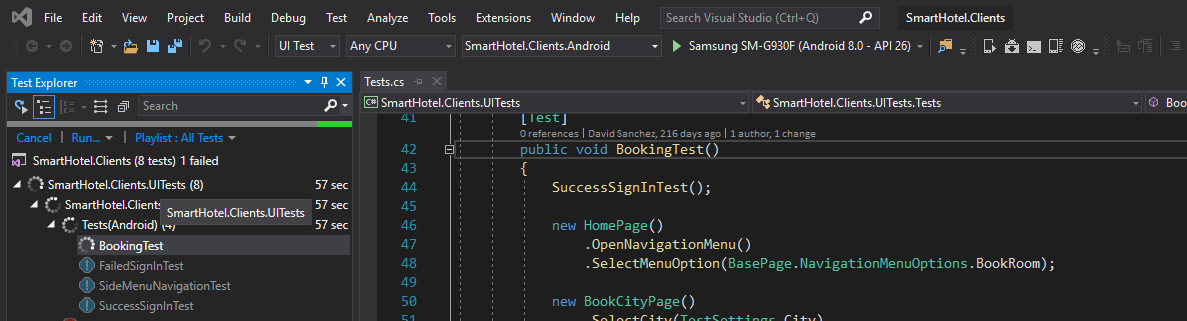

In this article we present CameraTF, a Xamarin.Android sample project built for teaching purposes that uses the white hardhat object detection model from TailwindTraders.

Let’s begin 😀

The heart of this app is an offline deep learning model. We needed to prioritize inference speed over accuracy and run the model on a mobile device, which is why we chose TensorFlow Lite.

EmguTF is a C# binding for TensorFlow Lite. TensorFlow Lite is a C++ library designed for mobile and IoT devices: it parses a deep learning model serialized in the FlatBuffers format and runs inference with its Interpreter class.

The .NET Standard project Emgu.TF.Lite is a simplified version of EmguTF that exposes an Interpreter class in C#: you provide the model's input tensors and get back its output tensors.

We used an SSD MobileNet model, applying transfer learning to ssd_mobilenet_v1_0.75_depth_300x300_coco14_sync_2018_07_03 and training it on Google Cloud TPUs with this pipeline config. Training was fast and relatively easy.

For more information about this process you can have a look at this awesome post.

Once the hardhat dataset was ready and training had finished, we obtained the files hardhat_detect.tflite and hardhat_labels_list.txt.

In this sample we used the Xamarin binding for the older android.hardware.Camera API, which keeps the camera setup code easy to follow and gives us every frame for further processing.

CameraTF consists of an Android Activity that displays a CameraSurfaceView with the rear camera preview. To get each camera frame we use FastAndroidCamera.

With this library, on the devices we tested (Nokia 6.1, Google Pixel XL, LG G4), the OnPreviewFrame callback of our INonMarshalingPreviewCallback receives about 30 fps for analysis, while the preview in our CameraSurfaceView stays more than acceptably smooth.

The CameraController class handles camera initialization: it sets the preview frame format to NV21 and sets an fps range and a suitable resolution with SetPreviewFpsRange and SetPreviewSize.
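To make the configuration step more concrete, here is a small C++ sketch of two calculations a controller like this typically needs. The selection rule in `ChooseClosestPreviewSize` (closest pixel count to a target) is a hypothetical choice of ours, not necessarily what CameraController does; on Android the candidate sizes would come from the camera's supported preview sizes. `Nv21BufferSize` reflects the fact that NV21 stores 12 bits per pixel.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdlib>
#include <vector>

struct PreviewSize {
    int width;
    int height;
};

// Hypothetical helper: picks the supported preview size whose pixel count is
// closest to the requested one. The real CameraController may use another rule.
PreviewSize ChooseClosestPreviewSize(const std::vector<PreviewSize>& supported,
                                     int targetWidth, int targetHeight) {
    assert(!supported.empty());
    const long long targetArea = static_cast<long long>(targetWidth) * targetHeight;
    PreviewSize best = supported.front();
    long long bestDiff = -1;
    for (const PreviewSize& size : supported) {
        const long long area = static_cast<long long>(size.width) * size.height;
        const long long diff = std::llabs(area - targetArea);
        if (bestDiff < 0 || diff < bestDiff) {
            best = size;
            bestDiff = diff;
        }
    }
    return best;
}

// NV21 uses 12 bits per pixel: a full-resolution Y plane plus an interleaved
// VU plane at quarter resolution, so one frame takes width * height * 3 / 2 bytes.
std::size_t Nv21BufferSize(int width, int height) {
    return static_cast<std::size_t>(width) * height * 3 / 2;
}
```

For example, a 640×480 preview needs 460,800 bytes per frame, which is the size each reusable preview buffer must have. Note also that on Android the fps range passed to SetPreviewFpsRange is scaled by 1000, so 30 fps is expressed as 30000.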

One of the most important steps toward real-time processing is converting NV21 (YUV420sp) frames to RGB in native code.

For more details, take a look at the files YuvHelper and yuv2rgb.cc.
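To illustrate what that conversion involves, here is a minimal C++ sketch of an NV21 to RGB888 conversion. It is an illustrative stand-in, not the code in yuv2rgb.cc: it uses the common BT.601 limited-range integer coefficients and assumes even frame dimensions, as Android preview sizes normally are.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Clamps a fixed-point (x256) channel value to the 0..255 byte range.
static uint8_t ClampToByte(int scaled) {
    if (scaled < 0) return 0;
    const int value = scaled >> 8;
    return static_cast<uint8_t>(value > 255 ? 255 : value);
}

// Converts an NV21 (YUV420sp) frame to packed RGB888 (3 bytes per pixel).
// NV21 layout: width*height luma (Y) bytes, then one interleaved V,U pair
// for every 2x2 block of pixels.
std::vector<uint8_t> Nv21ToRgb(const uint8_t* nv21, int width, int height) {
    assert(width % 2 == 0 && height % 2 == 0);
    std::vector<uint8_t> rgb(static_cast<std::size_t>(width) * height * 3);
    const uint8_t* yPlane = nv21;
    const uint8_t* vuPlane = nv21 + static_cast<std::size_t>(width) * height;
    for (int row = 0; row < height; ++row) {
        for (int col = 0; col < width; ++col) {
            const int c = yPlane[row * width + col] - 16;
            // Each VU row covers two pixel rows; V comes before U in NV21.
            const int vu = (row / 2) * width + (col & ~1);
            const int e = vuPlane[vu] - 128;      // V (Cr)
            const int d = vuPlane[vu + 1] - 128;  // U (Cb)
            const std::size_t out = (static_cast<std::size_t>(row) * width + col) * 3;
            rgb[out]     = ClampToByte(298 * c + 409 * e + 128);
            rgb[out + 1] = ClampToByte(298 * c - 100 * d - 208 * e + 128);
            rgb[out + 2] = ClampToByte(298 * c + 516 * d + 128);
        }
    }
    return rgb;
}
```

The integer, shift-based arithmetic avoids floating point in the per-pixel loop, which matters when this runs on every frame of a 30 fps stream.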

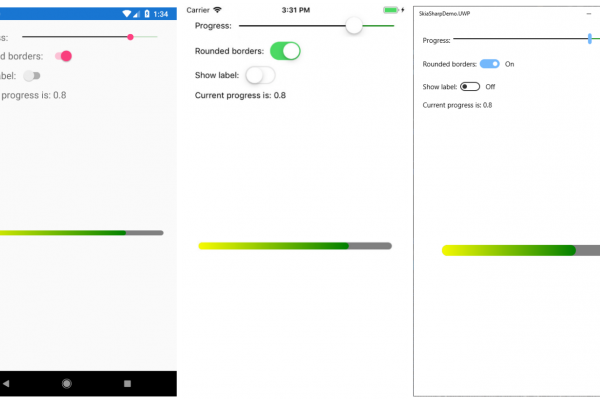

In the CameraAnalyzer class you can easily follow the stages that produce the input tensor. Once we have the frame in RGB, we scale it to the 300×300 input size of the SSD MobileNet model and rotate it to compensate for the camera sensor's orientation. All of these frame operations are done with SkiaSharp, again to take advantage of the performance of native-code image processing.
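The app performs these two steps with SkiaSharp; the plain C++ sketch below only shows what they do to the pixel data. It uses nearest-neighbour scaling (SkiaSharp may filter differently), and the 90° clockwise rotation assumes a rear sensor mounted at 90°, the common case on Android phones. `RgbImage` is a hypothetical container introduced for the example.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical packed RGB888 image (3 bytes per pixel, row-major).
struct RgbImage {
    int width;
    int height;
    std::vector<uint8_t> pixels;
};

// Nearest-neighbour resize, e.g. from the preview size down to 300x300.
RgbImage ResizeNearest(const RgbImage& src, int dstWidth, int dstHeight) {
    assert(src.pixels.size() == static_cast<std::size_t>(src.width) * src.height * 3);
    RgbImage dst{dstWidth, dstHeight,
                 std::vector<uint8_t>(static_cast<std::size_t>(dstWidth) * dstHeight * 3)};
    for (int y = 0; y < dstHeight; ++y) {
        const int srcY = y * src.height / dstHeight;
        for (int x = 0; x < dstWidth; ++x) {
            const int srcX = x * src.width / dstWidth;
            for (int ch = 0; ch < 3; ++ch) {
                dst.pixels[(static_cast<std::size_t>(y) * dstWidth + x) * 3 + ch] =
                    src.pixels[(static_cast<std::size_t>(srcY) * src.width + srcX) * 3 + ch];
            }
        }
    }
    return dst;
}

// Rotates 90 degrees clockwise, turning a landscape sensor frame upright.
RgbImage Rotate90Clockwise(const RgbImage& src) {
    RgbImage dst{src.height, src.width, std::vector<uint8_t>(src.pixels.size())};
    for (int y = 0; y < dst.height; ++y) {
        for (int x = 0; x < dst.width; ++x) {
            // Destination (y, x) comes from source row (src.height - 1 - x), column y.
            const int srcRow = src.height - 1 - x;
            const int srcCol = y;
            for (int ch = 0; ch < 3; ++ch) {
                dst.pixels[(static_cast<std::size_t>(y) * dst.width + x) * 3 + ch] =
                    src.pixels[(static_cast<std::size_t>(srcRow) * src.width + srcCol) * 3 + ch];
            }
        }
    }
    return dst;
}
```

Since the target is square, scaling first and rotating afterwards means the rotation only touches 300×300 pixels instead of the full preview frame.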

The final step is to copy the RGB values into the input tensor, invoke the interpreter, and read the output tensors. For each invocation, this model returns four output tensors as float arrays.
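Filling the input tensor is a simple loop over the pixels. The C++ sketch below assumes a float model laid out as [1, 300, 300, 3] (NHWC) and normalized to [-1, 1] with mean and standard deviation 127.5, the usual SSD MobileNet preprocessing; a quantized (uint8) model would instead take the raw bytes unchanged. `FillInputTensor` is a hypothetical name, and a plain float buffer stands in for the interpreter's input tensor.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Copies an RGB888 frame into a float NHWC input tensor, mapping 0..255 to -1..1.
// Assumption: float model with mean = std = 127.5 (not confirmed for hardhat_detect.tflite).
void FillInputTensor(const std::vector<uint8_t>& rgb, float* tensor,
                     int width = 300, int height = 300) {
    const std::size_t count = static_cast<std::size_t>(width) * height * 3;
    assert(rgb.size() == count);
    constexpr float kMean = 127.5f;
    constexpr float kStd = 127.5f;
    for (std::size_t i = 0; i < count; ++i) {
        tensor[i] = (static_cast<float>(rgb[i]) - kMean) / kStd;
    }
}
```

Because RGB888 is already interleaved in NHWC order, no channel reordering is needed: byte i of the frame maps straight to element i of the tensor.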

The first contains the bounding boxes of the detected objects, the second holds indices into hardhat_labels_list.txt that give the class of each object, the third holds a confidence score for each object, and the fourth is the number of detected objects.
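Decoding those four tensors can be sketched in C++ as follows. The sketch assumes the usual TFLite_Detection_PostProcess layout: boxes as normalized [ymin, xmin, ymax, xmax] per detection, one float class index and one score in [0, 1] per detection, and the count as a single float. `Detection`, `ParseDetections`, and the 0.5 threshold are illustrative choices, not values taken from the app.

```cpp
#include <cassert>
#include <string>
#include <vector>

// Hypothetical result type; coordinates are normalized to [0, 1].
struct Detection {
    float top, left, bottom, right;
    std::string label;
    float score;
};

// Decodes boxes, class indices, scores and count, keeping detections above minScore.
std::vector<Detection> ParseDetections(const float* boxes, const float* classes,
                                       const float* scores, const float* count,
                                       const std::vector<std::string>& labels,
                                       float minScore = 0.5f) {
    assert(minScore >= 0.0f && minScore <= 1.0f);
    std::vector<Detection> result;
    const int n = static_cast<int>(count[0]);
    for (int i = 0; i < n; ++i) {
        if (scores[i] < minScore) continue;
        const int classIndex = static_cast<int>(classes[i]);
        // Map the class index to a line of the labels file, guarding against bad indices.
        const std::string label =
            (classIndex >= 0 && classIndex < static_cast<int>(labels.size()))
                ? labels[classIndex] : "unknown";
        result.push_back({boxes[4 * i], boxes[4 * i + 1], boxes[4 * i + 2],
                          boxes[4 * i + 3], label, scores[i]});
    }
    return result;
}
```

To draw a box, multiply the normalized coordinates by the width and height of the view, keeping in mind that the frame was rotated before inference.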

This is a screen recording of the app running on a Pixel XL.

As you can see in the GIF, the processingTask inside the CameraAnalyzer class runs at roughly 7 fps.
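With frames arriving at about 30 fps and inference running at about 7 fps, the analyzer cannot process every frame. One common way to keep the preview smooth is to drop frames while an inference is still in flight. The C++ sketch below shows that pattern with a hypothetical `FrameGate` class; the actual processingTask in CameraAnalyzer may schedule its work differently.

```cpp
#include <atomic>
#include <cassert>

// Hypothetical gate: the camera callback starts a new inference only when the
// previous one has finished; frames arriving while it is busy are dropped.
class FrameGate {
public:
    // Returns true if the caller may start processing a new frame.
    bool TryBegin() {
        bool expected = false;
        return busy_.compare_exchange_strong(expected, true);
    }

    // Must be called once the processing started by TryBegin() finishes.
    void End() {
        const bool wasBusy = busy_.exchange(false);
        assert(wasBusy);
        (void)wasBusy;  // avoids an unused-variable warning when NDEBUG is set
    }

private:
    std::atomic<bool> busy_{false};
};
```

In the frame callback this becomes `if (gate.TryBegin()) { /* run inference, then */ gate.End(); }`. At these rates roughly three of every four frames are skipped, but the preview never waits on the model.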

The project is completely open to PRs and improvements, so feel free to contribute and open issues!